Analyzing open-ended feedback involves extracting meaningful insights from free-text survey responses, comments, and reviews. Unlike structured data, these responses require methods like thematic coding, sentiment analysis, and AI-driven tools to uncover trends, pain points, and opportunities. With the right approach and tools, companies can turn unstructured feedback into actionable insights that drive smarter decisions and improved experiences.

Data is everywhere. Ratings, star scores, NPS and CSAT all provide useful quantitative signals. But if you want to understand why a score is what it is, or what the underlying drivers are, you need open‑ended feedback. Words, stories, details: that’s where the real insight lies.

This article explores what open‑ended feedback is, how to analyse it, best practices, challenges, and tools that make the process scalable, accurate, and actionable.

What Does Open‑Ended Feedback Mean?

Open-ended feedback refers to responses in customer surveys or feedback systems that allow respondents to answer in their own words, rather than selecting from predefined options. In contrast to numerical scales (1–5, stars, etc.), multiple-choice, or yes/no options, open-ended questions invite respondents to explain, tell stories, highlight nuances, suggest improvements, and share emotions, among other things.


Some typical features of open‑ended feedback:

- Unstructured text: “I loved the friendliness of the employees, but I waited too long for the service”

- Rich qualitative detail: concrete examples of interaction, suggestions, complaints, compliments

- Emotional content: tone, sentiment, praise, frustration

- New themes that the survey designer didn’t anticipate

Because open‑ended feedback questions allow for this richness, they help organisations capture more nuanced understanding and uncover issues or opportunities that numeric scales and star ratings may miss.

Examples of Open-Ended Feedback Questions to Gather Qualitative Feedback

Crafting the right open-ended feedback questions is key to gathering actionable, thoughtful feedback from customers, employees, or peers. Below are examples of open-ended customer survey questions across several common feedback scenarios.

1. Customer Experience (CX) Feedback

These questions help understand the nuances behind customer satisfaction, pain points, and expectations:

- What did you enjoy most about your experience with us today?

- What could we do better next time?

- Was there anything about your interaction that exceeded or fell short of your expectations?

- If you could change one thing about our service/product, what would it be and why?

- Can you describe a recent experience with our brand that stood out to you—positively or negatively?

These open-ended feedback questions allow customers to highlight both operational issues and emotional responses that rating scales can’t capture.

2. Employee Feedback and Internal Surveys

Whether part of employee engagement surveys or feedback on internal initiatives, these questions help gather honest, detailed employee perspectives:

- What are the biggest obstacles you face in your role, and how could management support you better?

- How do you feel about the communication and collaboration within your team?

- What changes would you recommend to improve the work environment or team dynamics?

- Is there a recent project or initiative you believe could have been handled differently? Please explain.

- What motivates you to perform at your best, and what holds you back?

3. Open-Ended 360 Feedback Questions

360-degree feedback processes are enriched by open-ended responses, as they provide insights into soft skills, leadership styles, and interpersonal impact that ratings alone can’t reflect. Some well-crafted open-ended 360 feedback questions include:

- What are this person’s greatest strengths in their current role?

- In what areas could they improve or develop further?

- Can you share a time when this person had a significant positive impact on you or the team?

- What advice would you offer this person to help them grow professionally?

- How does this individual contribute to team morale or collaboration?

These questions are especially powerful in leadership development, peer reviews, and employee performance reviews, helping organisations understand not just how someone performs, but how they are perceived by those around them.

4. Product or Feature Feedback

When launching a new product or improving existing features, these questions help dig deeper into user preferences and usability:

- What do you like most (or least) about this feature?

- Were there any steps that felt confusing or unnecessary?

- What would make this product more useful or easier to use for you?

- If you’ve used similar products before, how does this compare?

- What problems are you trying to solve with our product, and how well does it help you do that?

How to Analyse Open‑Ended Feedback Responses?

Open‑ended feedback responses are powerful but more challenging to analyse because they are unstructured. Here is a sequence of steps one can follow to make sense of them:

1. Collection & Cleaning

- Ensure good quality in responses: prompt for enough detail, but not so long that people give up or write generic responses.

- Pre-process text: correct typos, normalise spelling, remove irrelevant or low-content responses, and filter out spam.
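As a rough illustration of the pre-processing step, here is a minimal Python sketch. The `LOW_CONTENT` list and the `min_words` threshold are assumptions chosen for the example, not recommended values, and the sketch does not attempt typo correction or spelling normalisation, which usually need a dedicated tool.

```python
import re
import unicodedata

# Hypothetical list of low-content answers; tune it for your own surveys.
LOW_CONTENT = {"n/a", "na", "none", "nothing", "no", "ok", "-", "."}


def clean_responses(raw, min_words=3):
    """Normalise, filter and de-duplicate free-text responses."""
    seen = set()
    cleaned = []
    for text in raw:
        # Normalise Unicode forms and collapse runs of whitespace.
        text = unicodedata.normalize("NFKC", text)
        text = re.sub(r"\s+", " ", text).strip()
        key = text.lower()
        # Drop empty, low-content, or very short answers.
        if key in LOW_CONTENT or len(key.split()) < min_words:
            continue
        # Drop exact duplicates (a crude spam signal).
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(text)
    return cleaned


sample = [
    "  I loved the friendliness of the employees,\nbut I waited too long.  ",
    "n/a",
    "ok",
    "I loved the friendliness of the employees, but I waited too long.",
    "Great!",
]
cleaned_sample = clean_responses(sample)
```

Whether a one-word answer such as "Great!" should be dropped is a judgment call; here it is filtered only because it falls under the assumed three-word minimum.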

2. Categorisation / Thematic Coding

- Read through a sample and identify recurring themes or topics.

- Create a codebook or taxonomy: topics/categories under which responses can be grouped (e.g. “speed of service,” “staff friendliness,” “pricing,” “product quality,” etc.).

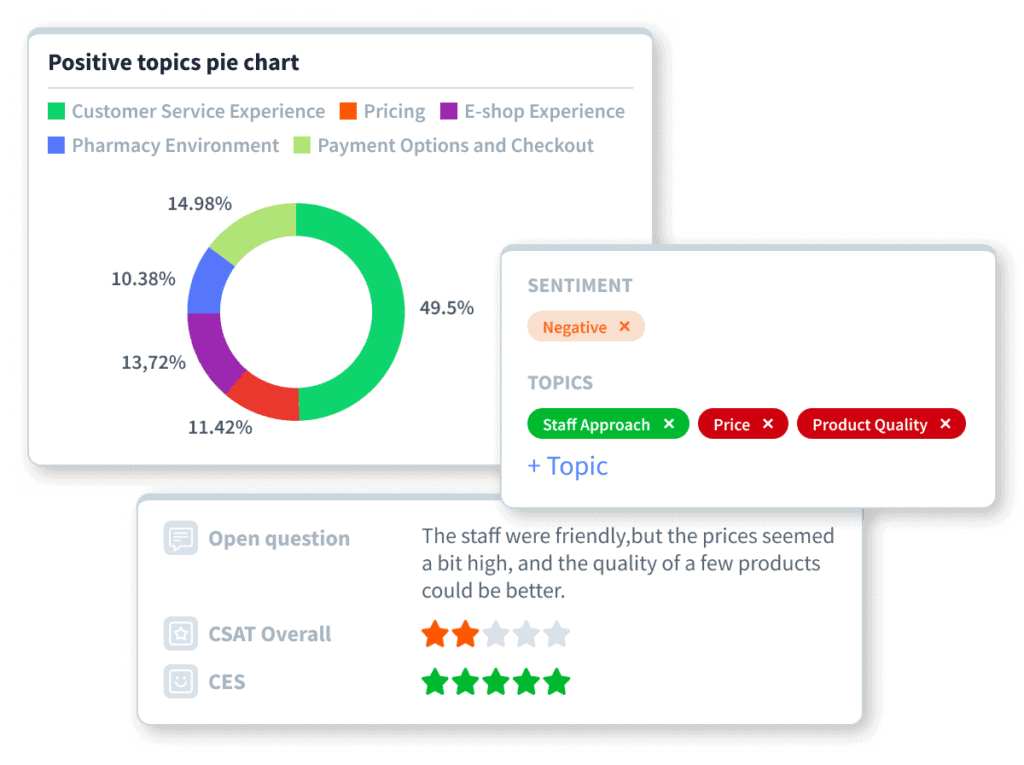

- Tag/code responses accordingly (manually or with the assistance of tools such as the AI Topic & Sentiment Analyser).
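The tagging step can be sketched with a simple keyword codebook. The theme names below come from the examples above; the keyword lists are assumptions made for illustration, and a real codebook would be built from your own sample of responses.

```python
import re

# Illustrative codebook: theme -> trigger keywords (assumed, not exhaustive).
CODEBOOK = {
    "speed of service": ["wait", "waited", "slow", "queue", "delay"],
    "staff friendliness": ["friendly", "friendliness", "rude", "polite"],
    "pricing": ["price", "expensive", "cheap", "cost"],
    "product quality": ["quality", "broken", "defective"],
}


def tag_response(text, codebook=CODEBOOK):
    """Return every theme whose keywords appear in the response."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    themes = [theme for theme, keywords in codebook.items() if words & set(keywords)]
    # Keep unmatched responses visible so new themes can be added later.
    return themes or ["uncoded"]


tags = tag_response(
    "I loved the friendliness of the employees, but I waited too long for the service"
)
```

Responses that land in "uncoded" are worth reading by hand, since that is where themes the codebook did not anticipate tend to show up.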

3. Sentiment Analysis

- For each response or each theme, identify whether the customer sentiment is positive, negative or neutral.

- If possible, detect degrees (mild complaint vs. severe dissatisfaction, praise vs. strong delight).
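To make the idea of polarity and degree concrete, here is a toy lexicon-based sketch. The word scores in `LEXICON` and the thresholds in `label` are assumptions for illustration only; the sketch ignores negation, sarcasm and domain-specific language, which is exactly where production tools earn their keep.

```python
import re

# Toy lexicon: word -> score from -2 to 2. Values are assumed for illustration.
LEXICON = {
    "love": 2, "loved": 2, "delighted": 2, "great": 1, "friendly": 1, "good": 1,
    "slow": -1, "long": -1, "confusing": -1, "hate": -2, "terrible": -2, "awful": -2,
}


def score_clause(clause):
    """Sum the lexicon scores of the words in one clause."""
    words = re.findall(r"[a-z']+", clause.lower())
    return sum(LEXICON.get(word, 0) for word in words)


def label(score):
    """Map a numeric score to a polarity with a rough degree."""
    if score >= 2:
        return "strongly positive"
    if score == 1:
        return "mildly positive"
    if score == 0:
        return "neutral"
    if score == -1:
        return "mildly negative"
    return "strongly negative"


def analyse(text):
    # Split on contrast words and sentence punctuation so that mixed
    # praise and criticism in one response are scored separately.
    clauses = re.split(r"\b(?:but|however|although)\b|[.;!?]", text, flags=re.IGNORECASE)
    return [(c.strip(), label(score_clause(c))) for c in clauses if c.strip()]


result = analyse("I love the product but hate how long I had to wait.")
```

Scoring each clause separately keeps the praise for the product from cancelling out the complaint about waiting.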

4. Frequency & Weighting

- Count how many responses fall under each theme. Which themes are most common?

- Weight by importance (if the survey asks for importance) or by impact (some themes may matter more to your objectives).

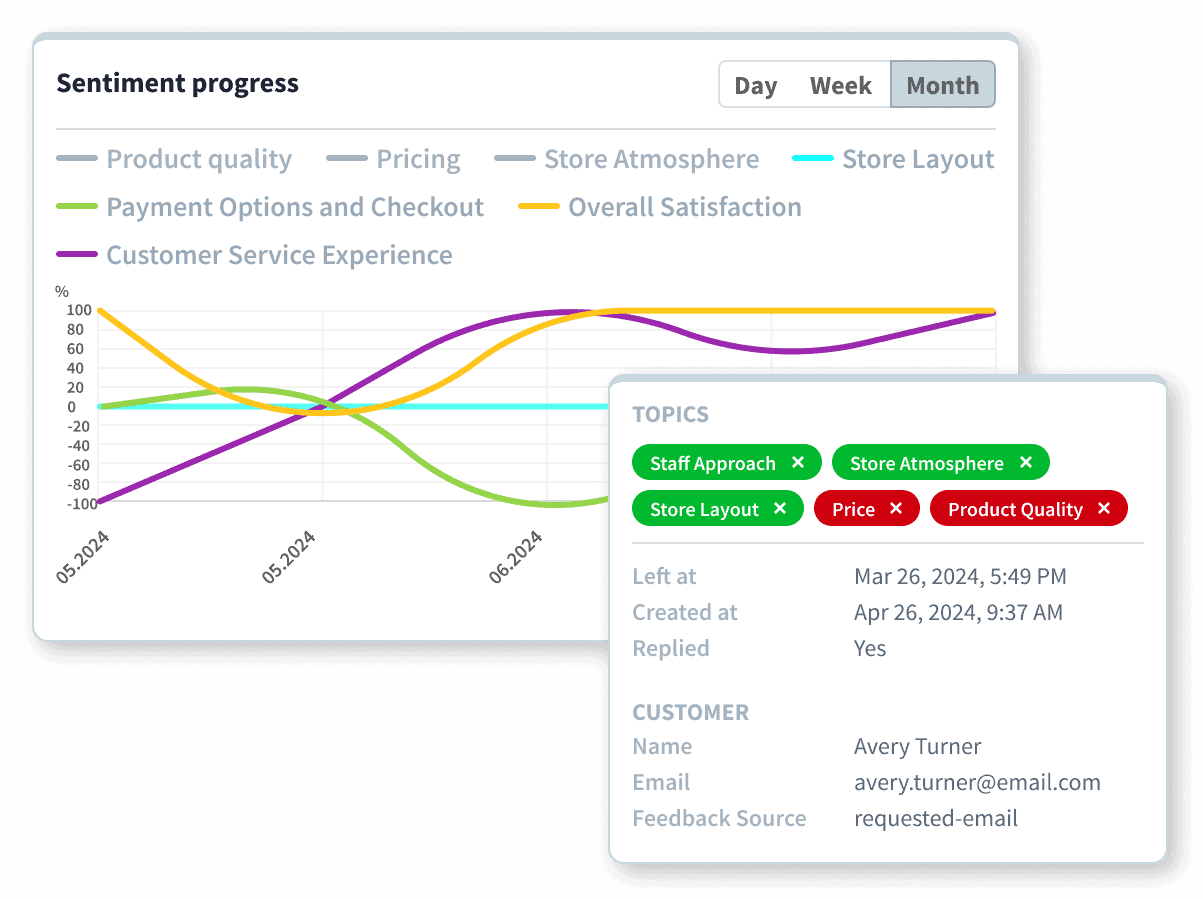
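Counting and weighting themes can be sketched in a few lines. The coded responses and the impact weights in `WEIGHTS` are hypothetical; in practice the weights would come from a survey importance question or from your own business priorities.

```python
from collections import Counter

# Each inner list holds the themes tagged on one response (hypothetical data).
coded = [
    ["speed of service", "staff friendliness"],
    ["speed of service"],
    ["pricing"],
    ["speed of service", "pricing"],
]

# Hypothetical impact weights; themes without a weight default to 1.0.
WEIGHTS = {"speed of service": 1.5, "pricing": 1.0, "staff friendliness": 0.5}

# How many responses mention each theme.
counts = Counter(theme for themes in coded for theme in themes)

# Share of all responses that mention the theme.
share = {theme: n / len(coded) for theme, n in counts.items()}

# Frequency multiplied by impact weight, then ranked from highest to lowest.
weighted = {theme: n * WEIGHTS.get(theme, 1.0) for theme, n in counts.items()}
ranking = sorted(weighted, key=weighted.get, reverse=True)
```

Reporting the share alongside the raw count makes themes comparable across surveys with different response volumes.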

5. Trend Analysis / Longitudinal Study

- Compare over time: are some customer complaints increasing or decreasing? Are emerging topics arising that you didn’t account for before?

- Segment analysis: by customer demographics, by product line, by employee location/team, etc., to see how different groups respond differently.
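One simple way to approach both comparisons is to compute the share of responses mentioning a theme per month and per segment. The records below (months, regions and themes) are invented for the example, and `theme_share` is a hypothetical helper, not part of any library.

```python
from collections import defaultdict

# (month, region, themes) for each coded response; hypothetical data.
records = [
    ("2024-01", "North", ["delivery delays"]),
    ("2024-01", "South", ["staff friendliness"]),
    ("2024-02", "North", ["delivery delays", "packaging damage"]),
    ("2024-02", "North", ["delivery delays"]),
    ("2024-02", "South", ["staff friendliness"]),
]


def theme_share(records, theme, key_index):
    """Share of responses mentioning `theme`, grouped by month (0) or region (1)."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for record in records:
        key = record[key_index]
        totals[key] += 1
        if theme in record[2]:
            hits[key] += 1
    return {key: hits[key] / totals[key] for key in sorted(totals)}


by_month = theme_share(records, "delivery delays", 0)
by_region = theme_share(records, "delivery delays", 1)
```

Using shares rather than raw counts avoids mistaking a busier month or a larger region for a growing problem.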

6. Visualization & Reporting

- Use CX dashboards, word clouds, sentiment graphs, and theme breakdowns.

- Highlight actionable insights: what to fix, what to maintain, where to allocate resources.

7. Action / Close the Loop

- Make data-based decisions: Use insights to inform changes.

- Close the loop: Respond to respondents (if feasible), especially on issues they raised.

- Track improvement: Monitor whether changes lead to improved customer satisfaction.


What Are the Best Methods to Analyze Open‑Ended Consumer Feedback?

Here are some best practices, tools and methods for analyzing open-ended consumer feedback effectively:

| Method | When to use | Strengths | Limitations |
| --- | --- | --- | --- |
| Manual coding / human thematic analysis | When the sample size is small, when validating themes, or during initial survey design | Deep nuance, good for exploratory work, high accuracy | Time-intensive, hard to scale, subjectivity/coder drift |
| Rule-based / keyword matching | When themes are fairly narrow and predictable (e.g. “price”, “delivery”, “quality”) | Fast, interpretable, easy to implement | Misses synonyms and context, brittle, less sensitive to nuance |
| Machine-learning / NLP methods (topic modelling, clustering) | For larger datasets, exploring latent topics, discovering unexpected patterns | Scalable and fast, can uncover themes human coders didn’t think of | Requires care; can be hard to interpret; sometimes noisy; may need human validation |
| Sentiment analysis (basic or aspect-based) | Always useful to attach sentiment; especially important for customer service and EX | Helps quantify how respondents feel, not just what they talk about | Older sentiment tools can misinterpret sarcasm, domain-specific language, and mixed sentiment in one response |
| Mixed human + AI / automated tools | When you need scale, speed, and consistency while keeping human oversight to ensure quality | Best of both worlds: speed + nuance; allows real-time insights | Costs; setup (taxonomy, training) required; ongoing tuning; risk of over-automation |

Best practice also includes validation (human checking), regularly updating the taxonomy or codebook to reflect new themes, and ensuring feedback is actionable.

Are Open‑Ended Feedback Questions Harder to Interpret?

Yes, open-ended feedback questions are harder to interpret, but definitely not impossible, and the payoff tends to justify the extra effort or the money spent on professional AI feedback analysis tools.

Challenges include:

- Volume: Many responses; reading all manually is unscalable.

- Ambiguity: Respondents may be vague, write about multiple issues in one answer, mix praise and criticism, or mention things that the survey didn’t anticipate.

- Language variety: Typos, slang, colloquialisms, different languages or dialects, mixed languages.

- Sentiment mixed in one response: For example, “I love the product but hate how long I had to wait.”

- Subjectivity in coding/interpretation: Two human coders might interpret the same text differently.

However, with good design, the right AI tools, and effective processes (such as taxonomy, human review, and quality control), these challenges can be effectively managed. The result is richer, more actionable insight that pure numeric feedback cannot provide.
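One common quality-control check for coder subjectivity is to measure how often two coders agree beyond what chance alone would produce. The sketch below computes Cohen's kappa, κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e the agreement expected by chance. The labels assigned by the two coders are invented for the example.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Agreement between two coders, corrected for chance agreement."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of responses")
    n = len(coder_a)
    # Observed agreement: share of responses given the same label.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Expected agreement if each coder labelled at random with their own label mix.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[lab] * freq_b[lab] for lab in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # Both coders used one identical label throughout.
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two coders for the same six responses.
coder_a = ["pricing", "delivery", "delivery", "staff", "pricing", "delivery"]
coder_b = ["pricing", "delivery", "staff", "staff", "pricing", "pricing"]
kappa = cohens_kappa(coder_a, coder_b)
```

Here the coders agree on four of six responses, which gives a kappa of 0.52. A low value is usually a sign that the codebook definitions need tightening before coding continues.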

Can ChatGPT Analyse Survey Results?

Short answer: yes, to some extent. But for production‑grade, scalable, consistent, domain‑aware analysis, specialised feedback analytics platforms tend to be much more reliable.

Here’s a breakdown:

ChatGPT (or similar large language models) can help you summarise, classify, and even generate sentiment scores for open‑ended feedback if you prompt it well. It can help you brainstorm categories, identify emerging themes, or draft summary reports.

But there are limitations:

- It may not be trained on your specific domain (product, customer base, local language nuances).

- It may produce inconsistent classifications over time.

- Privacy, data security, auditability—in many businesses, you need specialised tools that are secure, traceable, and that integrate with your feedback systems.
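One way to reduce inconsistent classifications is to give the model a fixed list of themes and reject any reply that falls outside it. The sketch below assumes a hypothetical `call_model` function that takes a prompt string and returns the model's reply; it deliberately does not use any real vendor API, and `fake_model` is a stand-in so the example runs offline. The theme list is also an assumption.

```python
# Hypothetical fixed taxonomy; replace with your own codebook.
TAXONOMY = ["delivery", "packaging", "customer support", "staff friendliness", "other"]


def build_prompt(comment, taxonomy=TAXONOMY):
    """Build a prompt that restricts the model to the known themes."""
    labels = ", ".join(taxonomy)
    return (
        "Classify the customer comment into exactly one of these themes: "
        f"{labels}.\nReply with the theme name only.\n\nComment: {comment}"
    )


def classify(comment, call_model, taxonomy=TAXONOMY):
    """`call_model` is a hypothetical function: prompt string -> reply string."""
    reply = call_model(build_prompt(comment, taxonomy)).strip().lower()
    # Anything outside the taxonomy is routed to a person instead of trusted.
    return reply if reply in taxonomy else "needs human review"


def fake_model(prompt):
    # Stand-in for a real LLM call, used only to make the sketch runnable.
    comment = prompt.split("Comment:", 1)[1]
    return " Delivery\n" if "late" in comment else "Shipping problems"


first = classify("My parcel arrived two days late", fake_model)
second = classify("The box was crushed", fake_model)
```

Validating the reply does not make the model more accurate, but it keeps unexpected labels out of your counts and creates a review queue for the cases the model handled poorly.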

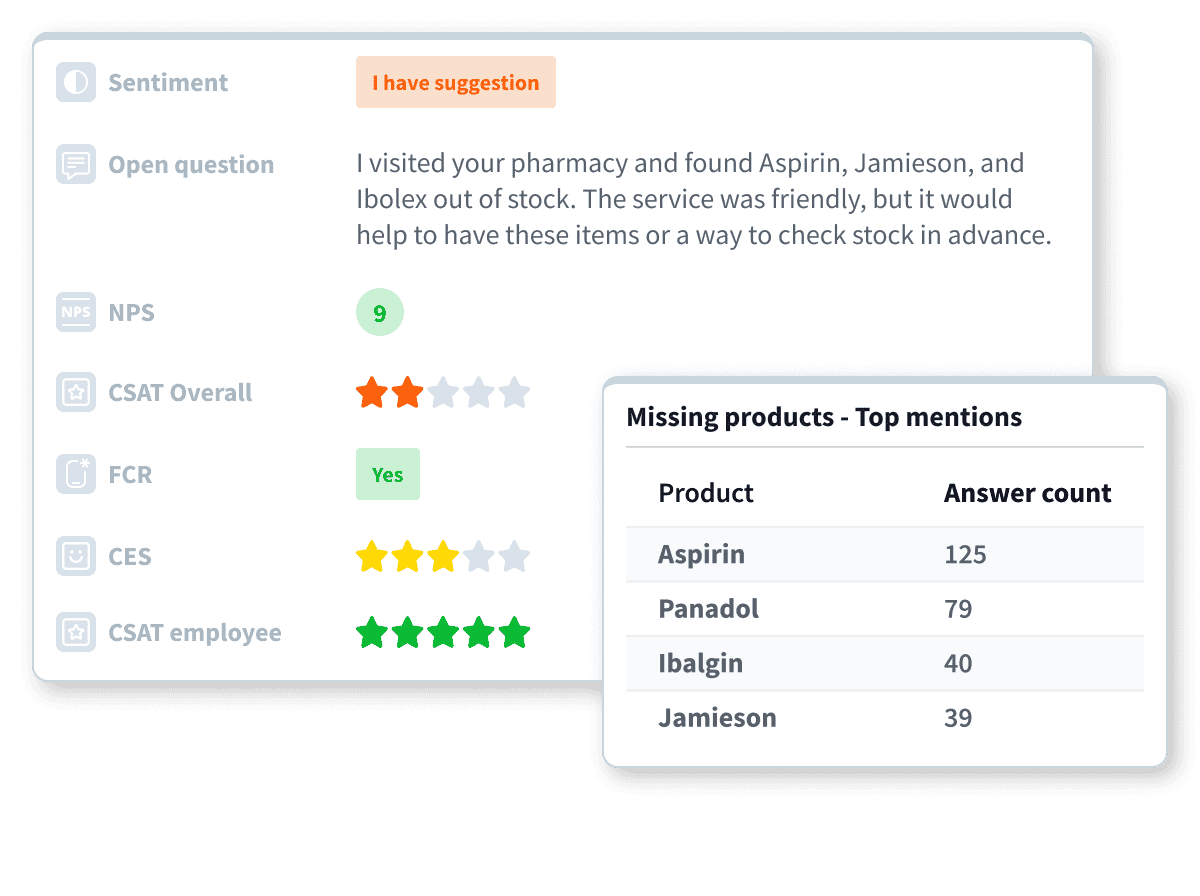

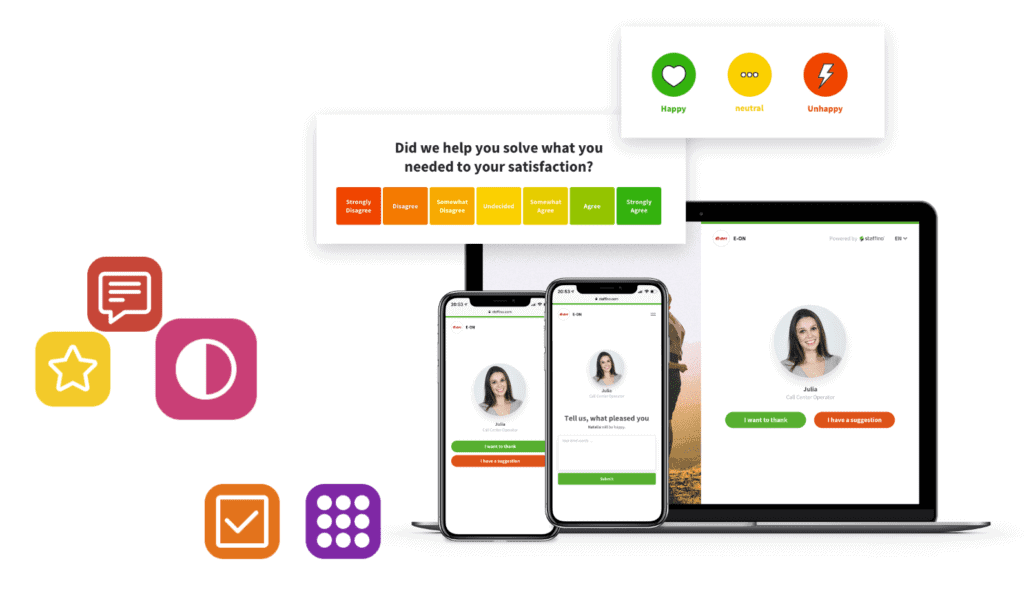

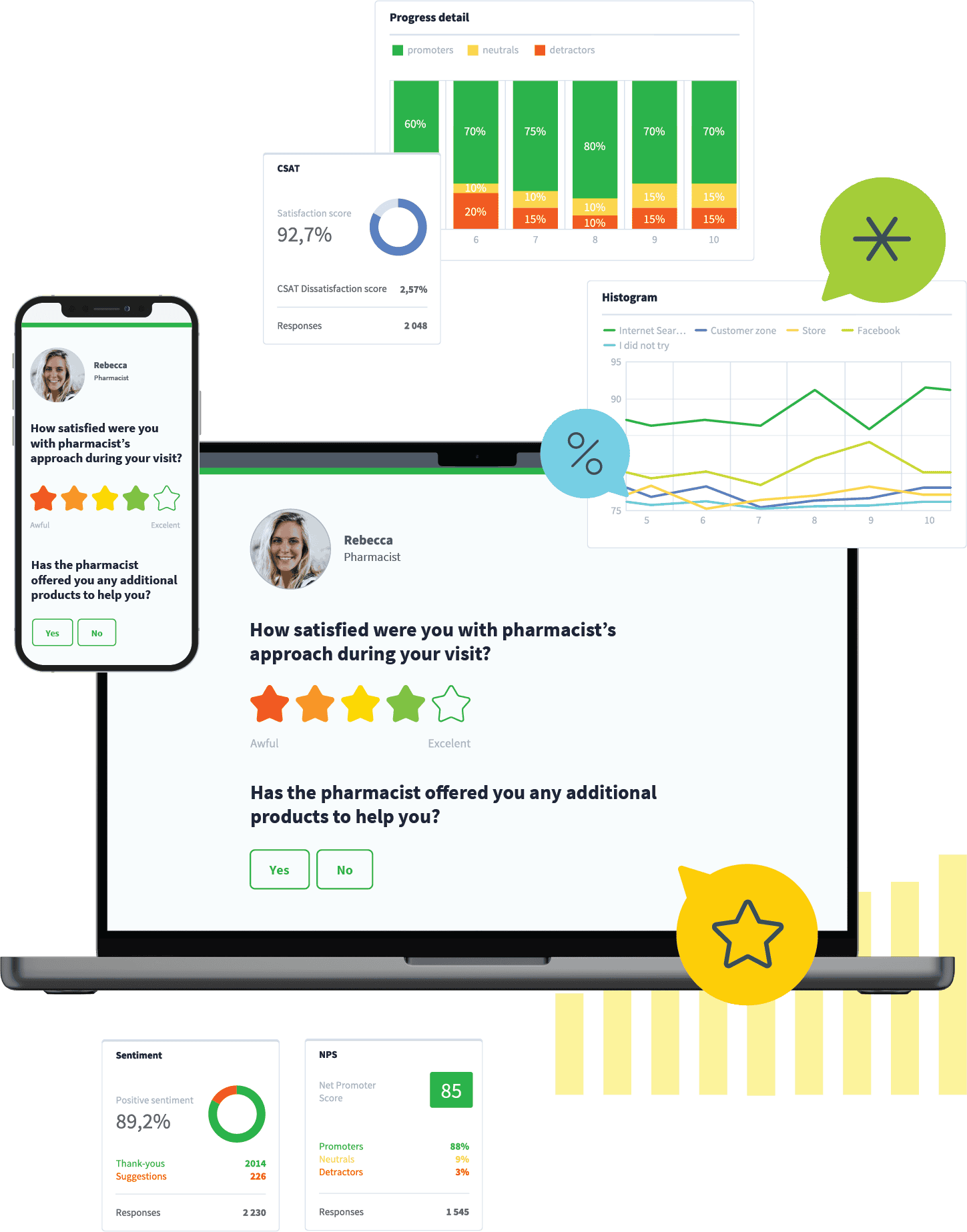

That’s where Staffino’s AI feedback tools come in. Staffino offers an AI Topic & Sentiment Analyser, among other features, designed precisely for analysing open-ended feedback. Its models are trained on thousands of real feedback entries, across voice and text, and follow best practices in taxonomy, topic classification, and sentiment scoring.

It supports multiple languages, handles large volumes, and integrates seamlessly into dashboards. Using Staffino means high precision for theme detection, sentiment analysis, and topic categorisation, so businesses don’t have to reinvent or validate every step themselves. Staffino’s CX management platform is purpose‑built for reliability, scale, integration, and action.

Why Open‑Ended Feedback Matters (Versus Purely Numeric and Star Ratings)

It’s worth emphasising what you gain by investing in open‑ended feedback and analyzing it properly:

1. Understanding the “Why” Behind the Number

A star rating of 4/5 or a CSAT score of 80% tells you how people feel in aggregate, but not why they feel that way.

2. Identifying Unexpected Issues or Hidden Insights

Sometimes customers (or employees) bring up issues you didn’t anticipate — things you didn’t include in your closed‑ended options.

3. Showing That You’re Listening

Respondents want to feel heard, and letting them speak freely builds trust and increases satisfaction. When you respond to feedback or show that you acted on it, you close the feedback loop, which strengthens loyalty.

4. Differentiation & Competitiveness

Companies that understand and act on detailed feedback can better refine their service, product, and culture. Competitor benchmarking (public reviews, etc.) often relies on details, not just scores. Staffino’s AI Public Reviews & Competition Analyser helps here.

5. Better Decision‑Making

When leadership sees trends in qualitative feedback, they can allocate resources more effectively: training, process improvements, staff adjustments, and product design.

6. Improved Employee Experience & 360 Feedback

For internal feedback, open-ended employee feedback solutions allow people to express what standard ratings can’t capture: cultural issues, communication breakdowns, leadership style, innovation ideas, etc.

How to Interpret Survey Results Examples

Let’s walk through a hypothetical but realistic example of how you might interpret survey results, including open‑ended feedback.

Company X runs a post‑purchase customer survey: it includes a star rating (1–5), “Would you recommend us?”, and an open‑ended question: “What could we have done better today?”

The numeric data shows an average rating of 4.2/5, an NPS of +20, and most respondents saying they would recommend the company. So far, fairly good. But:

- The open feedback reveals that many complaints centre on delivery delays, packaging damage, difficulty reaching customer support, plus several comments appreciating the friendliness of employees.

- Breaking down by region, “delivery delays” appear much more often in some regions. In others, it’s much less.

- Customer sentiment analysis shows that among comments about delivery, negative sentiment is strong; among employee friendliness, positive but moderate.

From this, Company X can decide to:

- Prioritise logistics/delivery process improvements in the regions where delays are flagged.

- Consider revising packaging or handling to reduce damage.

- Possibly adjust customer support capacity or channel access (e.g. live chat) in certain regions.

- Also, communicate back to customers what changes are being made (closing the loop) to maintain trust.

You might also look at change over time: did delivery complaints increase after a new shipping provider was engaged? Did staffing changes in the warehouse affect things?
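To connect the numeric score with the open-ended themes in an example like this, one option is to compare the average star rating of responses that mention each theme against the overall average. The ratings and themes below are invented for illustration and are not Company X's figures.

```python
from collections import defaultdict
from statistics import mean

# (star rating, themes found in the open-ended answer); hypothetical data.
responses = [
    (5, ["staff friendliness"]),
    (5, []),
    (4, ["staff friendliness"]),
    (2, ["delivery delays"]),
    (3, ["delivery delays", "packaging damage"]),
    (5, []),
]

# Average rating across all responses.
overall = mean(rating for rating, _ in responses)

# Collect the ratings of every response that mentions a given theme.
ratings_by_theme = defaultdict(list)
for rating, themes in responses:
    for theme in themes:
        ratings_by_theme[theme].append(rating)

# Negative gap: responses mentioning the theme rate below the overall average.
gap = {theme: round(mean(rs) - overall, 2) for theme, rs in ratings_by_theme.items()}
```

With this sample the overall average is 4, and responses mentioning delivery delays sit 1.5 stars below it. That gap is a correlation, not proof of cause, but it is a reasonable signal for where to look first.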

Best Practices & Tips for Analysing Open-Ended Feedback

- Balance open and closed questions: too many open questions can reduce response rates; use numeric questions to quantify, open ones to explore.

- Maintain and update your taxonomy/themes periodically: languages, trends, and expectations change.

- Use quotes judiciously in reports: meaningful, vivid quotes bring data to life.

- Segment your feedback: by demographics, time, product lines, etc.

- Validate automated analysis with human oversight, especially at early stages or when making major decisions.

Staffino’s Approach to Open‑Ended Feedback

Since many of the challenges lie in scale, consistency, speed, and domain specificity, tools like Staffino’s are designed to address exactly those.

The main AI features of our customer experience platform include:

AI Topic & Sentiment Analyser

AI customer feedback semantic analysis automatically identifies what customers are talking about and how they feel. It supports both text and voice feedback.

AI Insight Summariser

AI Insight Summariser by Staffino converts large volumes of open-ended feedback into structured, actionable dashboards, summaries, and reports. It enables you to understand open-ended employee feedback and customer feedback quickly and effortlessly, helping prevent damage to company reputation or employee attrition.

AI Public Reviews & Competition Analyser

Staffino’s AI Public Reviews & Competition Analyser is useful for monitoring what people are saying about your brand in public review platforms (Google, Trustpilot, etc.), comparing with competitors. It helps uncover external sentiment and themes you may not see in your internal feedback.

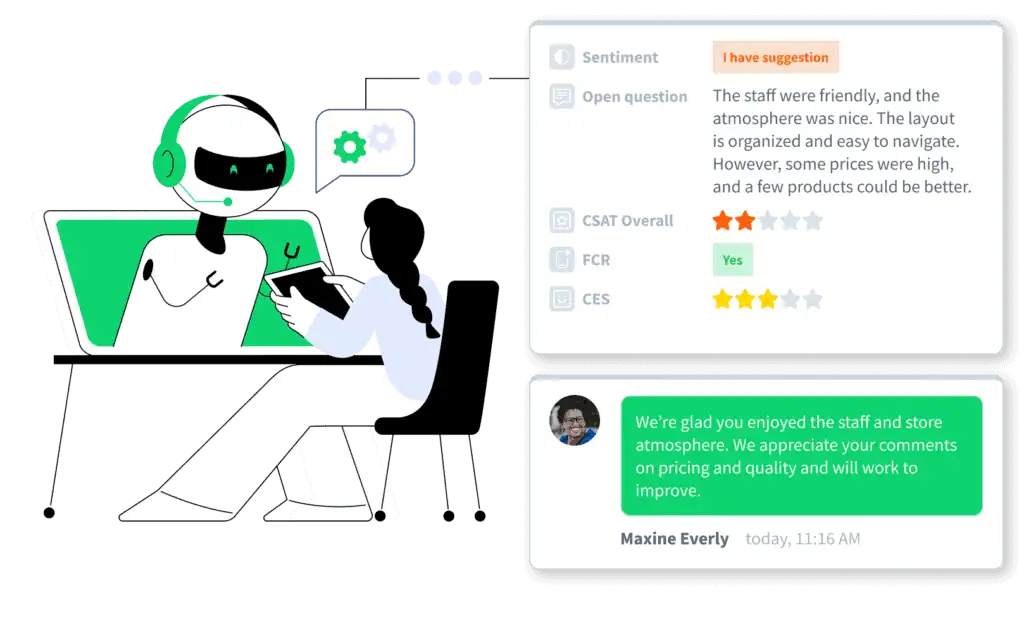

AI Feedback Responder

Once you know what people are saying, responding is part of closing the feedback loop. Staffino’s AI feedback response bot helps generate personalised, context‑aware responses to open‑ended feedback, automating routine cases while allowing escalation when needed.

These tools are trained or built on thousands of feedback data points, with curated taxonomies and domain‑specific tuning, which help avoid common pitfalls (misclassification, misunderstanding sentiment, and so on).

Start Asking Smart Open-Ended Questions with Staffino Today

Open‑ended feedback is a rich, often under‑utilised source of insight. It carries the voices, stories, complaints, praise, and ideas that numeric metrics can’t fully express. Yes, analysing it is more work (or at least requires better tools), but the return on that investment is high: better customer satisfaction, more engaged employees, smarter decision making, and competitive advantage.

If you want to understand why satisfaction is where it is, act on what people are really saying, and use feedback as a lever for change (not just measurement), then integrating open‑ended feedback questions and having robust methods or tools to analyse them is essential.


FAQ

What is open-ended feedback?

Open-ended feedback is qualitative feedback provided in the respondent’s own words, rather than selecting from predefined answers. It allows individuals to express thoughts, feelings, suggestions, and detailed experiences, offering deeper insight than closed-ended questions.

What are open-ended questions?

Open-ended questions are prompts that encourage detailed, descriptive responses. They typically begin with what, how, why, or describe, allowing respondents to explain their views rather than choose from set options.

What are some examples of open-ended questions?

- What did you like most about your experience with us?

- How can we improve our service?

- Why did you choose our product over others?

- Describe any challenges you faced while using our service.

- What suggestions do you have for future improvements?

What is an example of open-ended feedback?

“The checkout process was fast and easy, but I struggled to find product reviews on your website. A review section would really help before making a purchase decision.” This response gives insight into both strengths and areas for improvement.

How do you analyze open-ended responses?

Analyzing open-ended responses typically involves categorizing themes (topic coding), assessing sentiment, identifying trends, and extracting key phrases. This can be done manually, with rule-based systems, or using AI-powered tools for large-scale feedback.

What are the best methods to analyze open-ended consumer feedback?

The most effective methods include thematic coding, sentiment analysis, aspect-based sentiment scoring, topic modeling (e.g. clustering), and AI-based feedback analytics tools. Combining automated analysis with human oversight ensures both accuracy and contextual understanding.

How should survey results be interpreted?

Survey results should be interpreted by combining quantitative metrics (e.g. NPS, star ratings) with qualitative insights from open-ended responses. For example, if a customer gives a rating of 4/5 but comments on poor delivery, the rating alone doesn’t reflect their dissatisfaction. Context is key.

Are open-ended feedback questions harder to interpret?

Yes, open-ended responses are more complex to interpret due to their unstructured nature, varying detail levels, and language nuances. However, they offer significantly richer insights when analyzed properly.

Can ChatGPT analyze survey results?

ChatGPT can help summarize or categorize survey feedback, but it’s not specifically trained on large-scale, domain-specific feedback data. For highly accurate and consistent analysis, purpose-built tools like Staffino’s AI Feedback Topic & Sentiment Analyzer are recommended. Staffino’s tools are trained on thousands of real feedback cases and deliver precision tailored for customer and employee experience contexts.

What are the top tools for analyzing open-ended feedback?

The top tools for analyzing open-ended feedback include:

- Staffino (a leading platform offering AI-driven topic detection, sentiment analysis, and insight summarization, trained on thousands of real feedback entries)

- Qualtrics Text iQ

- Medallia

- MonkeyLearn

- NVivo (for academic/research settings)

- Microsoft Power BI (when combined with NLP add-ons)